Which Manual Tasks to Automate First

The Wrong Question Most Teams Ask

Teams deciding what to automate usually start with one of three questions:

- What takes the most time?

- What frustrates people the most?

- What can our tools automate easily?

These questions surface quickly when teams are under pressure. Backlogs are growing. Headcount is constrained. Executives want visible progress. Automation appears to be the fastest way to relieve strain. But automation programs often fail because teams automate the wrong work at the wrong time for the wrong reasons.

In many organizations, automation decisions are driven by pressure. Pressure to:

- reduce headcount dependency

- eliminate visible friction

- demonstrate progress

Under that pressure, manual work becomes an obvious target. If people are touching it, it must be inefficient. If it is inefficient, it must be automated.

That logic is intuitive. It is also consistently wrong.

The best candidates for automation are not the most manual tasks. They are the most stable, well-understood, and repeatable tasks, with clear ownership and predictable outcomes. This brief explains why that distinction matters, how to recognize it in practice, and how experienced teams avoid automating themselves into fragility.

Effort Is Not a Proxy for Automation Suitability

Time spent is not the same as automation suitability. Some tasks take time because they are absorbing variability that the system cannot handle. Frustration is not a reliable signal either. People are often most frustrated by tasks that sit at the boundary between structured systems and messy reality. Those boundaries exist for a reason.

Tool capability is the weakest signal of all. Modern automation platforms can technically automate almost anything. That does not mean they should. When tooling becomes the starting point, automation decisions drift toward what is easy to build instead of what is safe to operate.

This gives rise to a familiar pattern. Teams automate tasks that are visible, painful, and politically rewarding to remove. Those automations then require constant exception handling, manual overrides, and human supervision. Over time, they increase operational risk rather than reducing it.

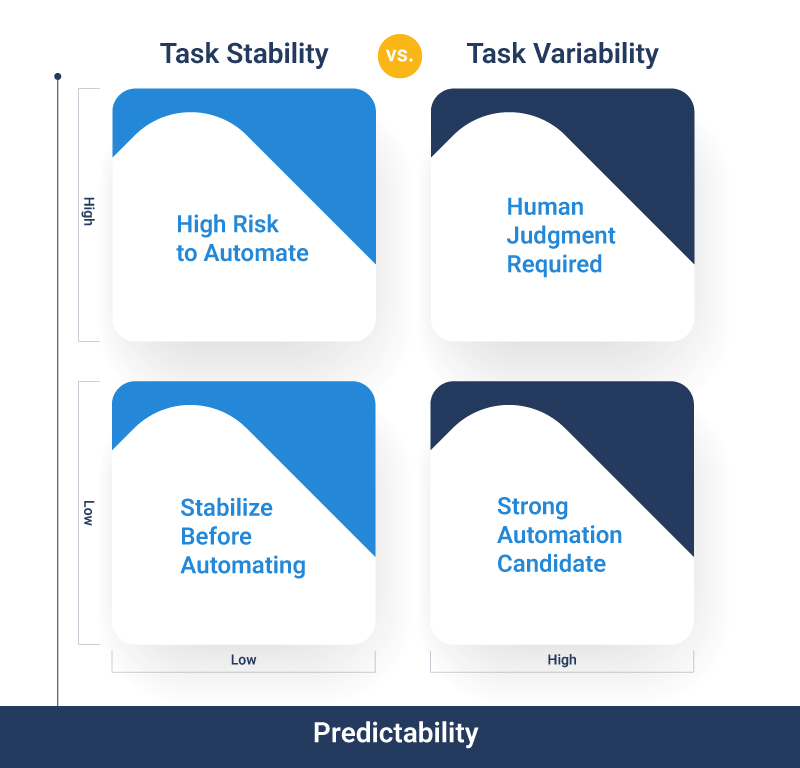

A better question is harder and less satisfying: Which tasks are stable enough to survive automation without supervision?

Manual Does Not Mean Automatable

Many manual tasks exist because the work itself is variable, ambiguous, or risky. At a technical level, manual work often serves a purpose, such as absorbing variability that the system cannot handle or acting as a human check before errors reach downstream systems.

Automating these tasks too early removes the human safety net. Errors surface later as financial adjustments, customer issues, or audit findings. The work did not disappear. It moved downstream and became harder to see.

Manual effort is often a signal. Treating it solely as a cost leads teams to automate the signal rather than address the cause.

Characteristics of Good Automation Candidates

There is no universal checklist for automation suitability. Context matters. That said, strong automation candidates consistently share a set of conditions. When these conditions are present, automation reduces risk. When they are missing, automation tends to amplify it.

Stable inputs and outputs

Good candidates operate on well-defined, consistently structured inputs. Outputs are predictable and verifiable. When input data varies widely or requires interpretation, automation logic becomes brittle, and small changes upstream can create cascading failures.

Low exception variability

Exceptions exist, but they are rare and well understood. The team can describe them clearly and quantify their frequency. When exception volume is high or poorly understood, automation quickly becomes an exception routing system rather than a throughput engine.

Predictable execution paths

The task follows a consistent sequence of steps. Branching logic is limited and intentional. Tasks with many conditional paths tend to encode process confusion rather than process clarity.

Clear success and failure states

It is obvious when the task completes correctly and equally obvious when it does not. Ambiguous completion criteria force humans back into supervision roles, negating much of the benefit.

Consistent execution frequency

Regularly running tasks are easier to observe, measure, and refine. Infrequent tasks often fail quietly and are discovered only when downstream impacts occur.

Explicit business ownership

Someone owns the outcome, not just the execution. When automation fails, there is no ambiguity about who is accountable. Without ownership, failures linger, and trust erodes.

Measurable baseline performance

The task’s current performance is understood. Error rates, cycle time, and exception frequency are known. Without a baseline, it is impossible to know whether automation improved anything.
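One way to capture such a baseline is sketched below. It assumes each manual run has been logged with a cycle time, an error flag, and an exception flag; the `TaskRun` record and the `baseline` function are hypothetical names used for illustration, not part of any particular tool.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class TaskRun:
    """One observed manual execution of the task (hypothetical record shape)."""
    cycle_minutes: float   # time from start to completion
    had_error: bool        # the output later needed correction
    was_exception: bool    # the run left the standard path


def baseline(runs: list[TaskRun]) -> dict[str, float]:
    """Summarize current performance so later automation can be compared to it."""
    if not runs:
        raise ValueError("no observed runs: a baseline cannot be computed")
    total = len(runs)
    return {
        "error_rate": sum(r.had_error for r in runs) / total,
        "exception_rate": sum(r.was_exception for r in runs) / total,
        "median_cycle_minutes": median(r.cycle_minutes for r in runs),
    }


if __name__ == "__main__":
    observed = [
        TaskRun(10, False, False),
        TaskRun(20, True, False),
        TaskRun(30, False, True),
        TaskRun(40, False, False),
    ]
    print(baseline(observed))
```

The median is used here rather than the mean so that a few unusually slow runs do not dominate the cycle-time figure; that is a design choice of this sketch, not a rule.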

Contained blast radius

When failure occurs, the impact is limited. Early automation efforts should fail cheaply. Automating tasks with a wide downstream impact before stability is proven increases organizational risk.

These characteristics are conditions, not requirements. The more of them that are present, the safer automation becomes. The fewer that are present, the more discipline is required before proceeding.
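The eight conditions above can be recorded per task as a simple yes/no assessment. The sketch below is a minimal illustration: the identifiers in `CONDITIONS` and the `readiness` function are hypothetical, and the unweighted count is an assumption of this example. It deliberately sets no pass/fail threshold, since the conditions are not hard requirements.

```python
from __future__ import annotations

# The eight conditions described above, as hypothetical identifiers.
CONDITIONS = (
    "stable_inputs_and_outputs",
    "low_exception_variability",
    "predictable_execution_paths",
    "clear_success_and_failure_states",
    "consistent_execution_frequency",
    "explicit_business_ownership",
    "measurable_baseline_performance",
    "contained_blast_radius",
)


def readiness(assessment: dict[str, bool]) -> tuple[int, list[str]]:
    """Return how many conditions are met and which ones are missing.

    Conditions absent from the assessment are treated as not met.
    """
    unknown = set(assessment) - set(CONDITIONS)
    if unknown:
        raise KeyError(f"unrecognized conditions: {sorted(unknown)}")
    missing = [c for c in CONDITIONS if not assessment.get(c, False)]
    return len(CONDITIONS) - len(missing), missing


if __name__ == "__main__":
    met, missing = readiness({
        "stable_inputs_and_outputs": True,
        "explicit_business_ownership": True,
    })
    print(f"{met} of {len(CONDITIONS)} conditions met; missing: {missing}")
```

The list of missing conditions is usually more useful than the count, because it names the work to do before automating.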

The Maturity Lens: Why Sequencing Matters

Most automation failures are sequencing failures.

Teams automate tasks before they understand them. They automate before measurement and stabilization. Automation then locks in ambiguity and accelerates failure.

A simple sequencing lens helps explain why.

First, observe. Teams need to understand how the task actually runs today, including informal steps and workarounds. Documentation is not enough. Observation reveals where humans intervene and why.

Second, measure. Frequency, exception rates, and failure modes must be visible. Measurement creates a shared reality. Without it, automation decisions are based on anecdote.

Third, stabilize. Inputs, handoffs, and ownership need to be clarified. Stabilization reduces variability and makes outcomes predictable.

Fourth, optimize. Only after stabilization does it make sense to improve efficiency or redesign flow.

Finally, automate. Automation becomes durable when it encodes a stable, understood process rather than compensating for unresolved variability.

When teams skip steps, automation becomes a shortcut around discipline. That shortcut almost always leads back to manual oversight, this time layered on top of automated complexity.
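The sequence can also be expressed as an ordered gate. The following sketch assumes a team tracks which stages it considers complete for a given task; `Stage`, `next_stage`, and `skipped_stages` are hypothetical names introduced for this example.

```python
from __future__ import annotations

from enum import IntEnum


class Stage(IntEnum):
    """The five stages of the sequencing lens, in order."""
    OBSERVE = 1
    MEASURE = 2
    STABILIZE = 3
    OPTIMIZE = 4
    AUTOMATE = 5


def next_stage(completed: set[Stage]) -> Stage | None:
    """Return the earliest stage not yet completed, or None when all are done."""
    for stage in Stage:
        if stage not in completed:
            return stage
    return None


def skipped_stages(completed: set[Stage]) -> list[Stage]:
    """List stages that were skipped on the way to the furthest one reached."""
    if not completed:
        return []
    furthest = max(completed)
    return [s for s in Stage if s < furthest and s not in completed]


if __name__ == "__main__":
    # A task that was observed and then automated directly.
    done = {Stage.OBSERVE, Stage.AUTOMATE}
    print("next:", next_stage(done))
    print("skipped:", skipped_stages(done))
```

For a task that was observed and then automated directly, `next_stage` points back to measurement and `skipped_stages` lists the three stages that were bypassed.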

Why Teams Automate the Wrong Tasks First

Poor automation choices are rarely the result of ignorance. They are the result of pressure.

Visibility bias plays a major role. Tasks that generate complaints or consume visible effort attract attention. Quiet failures that create downstream cost often do not.

Executive pressure compounds this bias. Leaders want visible progress. Automated tasks make good demonstrations, even when they create hidden risk.

Tool-driven roadmaps distort priorities. When platforms promise rapid automation, backlogs form around what is easiest to build, not what is safest to automate.

Quick-win incentives reinforce short-term thinking. Teams are rewarded for delivery, not durability. Long-term operational cost is deferred.

ROI is also frequently misinterpreted. Time saved is easy to quantify; the new risk introduced into the process is not. As a result, automation business cases often overestimate the benefit and underestimate the operational cost.
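A rough worked example shows how the operating cost can erase the headline saving. The function below is a sketch under stated assumptions: it covers only exception handling and ongoing support time, not the harder-to-quantify risk, and every parameter name and figure is hypothetical.

```python
def net_hours_saved(
    runs_per_month: int,
    manual_minutes_per_run: float,
    exception_rate: float,
    minutes_per_exception: float,
    support_hours_per_month: float,
) -> float:
    """Monthly hours saved after subtracting the time spent operating the automation."""
    if not 0.0 <= exception_rate <= 1.0:
        raise ValueError("exception_rate must be between 0 and 1")
    # Headline benefit: manual time no longer spent on each run.
    gross_hours = runs_per_month * manual_minutes_per_run / 60
    # Operating cost: runs that fall out of the automation and need a person.
    exception_hours = runs_per_month * exception_rate * minutes_per_exception / 60
    return gross_hours - exception_hours - support_hours_per_month


if __name__ == "__main__":
    # Illustrative figures only, not measurements.
    print(net_hours_saved(
        runs_per_month=600,
        manual_minutes_per_run=5,
        exception_rate=0.25,
        minutes_per_exception=16,
        support_hours_per_month=15,
    ))
```

With these illustrative figures, the gross saving is 50 hours per month, exception handling consumes 40, and support consumes 15, so the net result is a loss of 5 hours per month before any risk is considered.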

Each of these pressures pushes teams to automate manual, painful, and unstable tasks. The downstream effects are predictable:

- Exception volume grows.

- Shadow processes and workarounds emerge.

- Leadership trust declines.

- Automation teams become support teams.

How Experienced Teams Decide What to Automate

Mature teams behave differently, even under the same pressures:

- They separate annoyance from suitability. A task can be frustrating and still be a poor automation candidate.

- They explicitly decide what stays manual. Manual work is not treated as failure. It is treated as intentional control until conditions change.

- They pilot where failure is cheap. Early automation efforts are scoped to minimize blast radius. Learning is prioritized over scale.

- They treat automation as a portfolio decision. Risk is balanced across tasks. Not everything moves at once.

These teams rely on shared understanding, technical discipline, and operational humility.

Discipline Before Automation

To reiterate, automation success is not determined by tooling. It is determined by sequencing, stability, and ownership.

Automating the wrong tasks increases cost and risk. Automating the right tasks at the right time reduces both.

If you want to assess automation readiness within the context of overall ERP health, a structured ERP Health Assessment can help identify where discipline is missing before automation amplifies the gaps.

For teams managing large automation backlogs, this Manual Task Audit Workbook can support a more grounded prioritization conversation, one based on risk, stability, and ownership rather than effort alone.